The Intel Skull Canyon NUC6i7KYK mini-PC Review

by Ganesh T S on May 23, 2016 8:00 AM EST

HTPC Credentials

The higher TDP of the processor in Skull Canyon, combined with the new chassis design, makes the unit a bit noisier than the traditional NUCs. It would be tempting to say that the extra EUs in the Iris Pro Graphics 580, combined with the eDRAM, would make GPU-intensive renderers such as madVR operate more effectively. That may be partly true (though madVR now has a DXVA2 option for certain scaling operations), but the GPU still doesn't have full HEVC 10b decoding, or stable drivers for HEVC decoding on Windows 10. In any case, it is still worthwhile to evaluate the basic HTPC capabilities of the Skull Canyon NUC6i7KYK.

Refresh Rate Accuracy

Starting with Haswell, Intel, AMD and NVIDIA have been on par with respect to display refresh rate accuracy. The most important refresh rate for videophiles is obviously 23.976 Hz (the 23 Hz setting). As expected, the Intel NUC6i7KYK (Skull Canyon) has no trouble with refreshing the display appropriately in this setting.

The gallery below presents some of the other refresh rates that we tested out. The first statistic in madVR's OSD indicates the display refresh rate.
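To put refresh rate accuracy in perspective, the sketch below estimates how long it takes for the display clock and the video clock to drift a full frame apart, which is when a renderer has to drop or repeat a frame. It assumes the nominal film rate of 24000/1001 Hz (the "23.976 Hz" figure) and ignores audio clock deviation. The function name is hypothetical and the calculation is an illustration, not madVR's actual algorithm.

```python
from fractions import Fraction

# Nominal film rate: 24000/1001 Hz, usually quoted as 23.976 Hz.
FILM_RATE = Fraction(24000, 1001)


def seconds_between_glitches(display_hz, video_fps=FILM_RATE):
    """Seconds until the display and video drift one full frame apart.

    Returns None when the two rates match exactly, since no drop or
    repeat is expected in that idealized case.
    """
    # Frames of drift accumulated per second of playback.
    drift = abs(Fraction(display_hz) - Fraction(video_fps))
    if drift == 0:
        return None
    return float(1 / drift)


if __name__ == "__main__":
    # 23.976 fps content on a display running at exactly 24 Hz.
    print(seconds_between_glitches(24))
    # A hypothetical measured refresh rate, passed as a string so the
    # decimal value is represented exactly.
    print(seconds_between_glitches("23.97612"))
```

With the display at exactly 24 Hz, the interval works out to 1001/24 seconds, or one repeated frame roughly every 42 seconds. A display that lands within a few ten-thousandths of a hertz of the target stretches that interval to hours.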

Network Streaming Efficiency

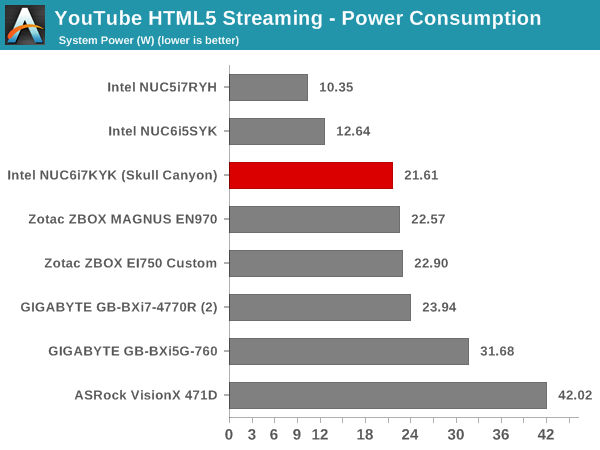

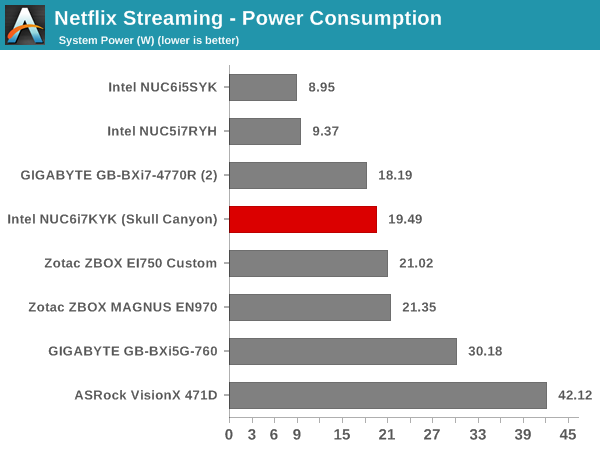

Evaluation of OTT playback efficiency was done by playing back our standard YouTube test stream and five minutes from our standard Netflix test title. Using HTML5, the YouTube stream plays back a 1080p H.264 encoding. Since YouTube now defaults to HTML5 for video playback, we have stopped evaluating Adobe Flash acceleration. Note that only NVIDIA exposes GPU and VPU loads separately. Both Intel and AMD bundle the decoder load along with the GPU load. The following two graphs show the power consumption at the wall for playback of the HTML5 stream in Mozilla Firefox (v 46.0.1).

GPU load was around 13.71% for the YouTube HTML5 stream and 0.02% for the steady state 6 Mbps Netflix streaming case. The power consumption of the GPU block was reported to be 0.71W for the YouTube HTML5 stream and 0.13W for Netflix.

Netflix streaming evaluation was done using the Windows 10 Netflix app. Manual stream selection is available (Ctrl-Alt-Shift-S) and debug information / statistics can also be viewed (Ctrl-Alt-Shift-D). Statistics collected for the YouTube streaming experiment were also collected here.

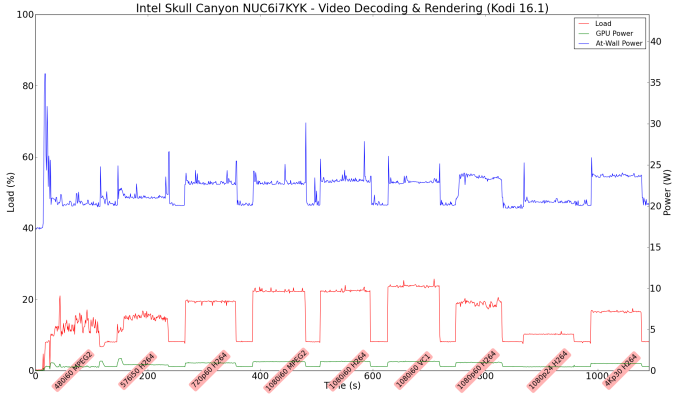

Decoding and Rendering Benchmarks

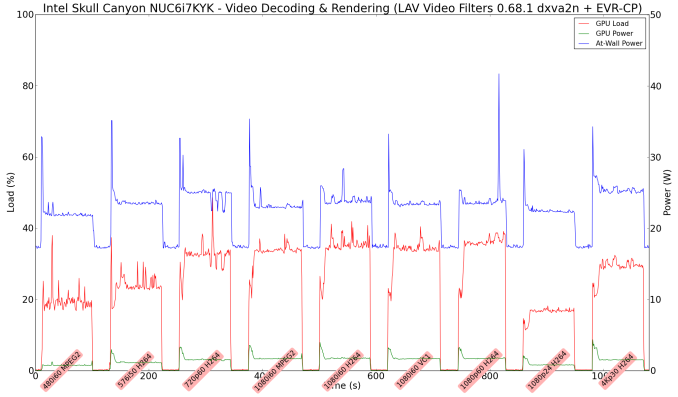

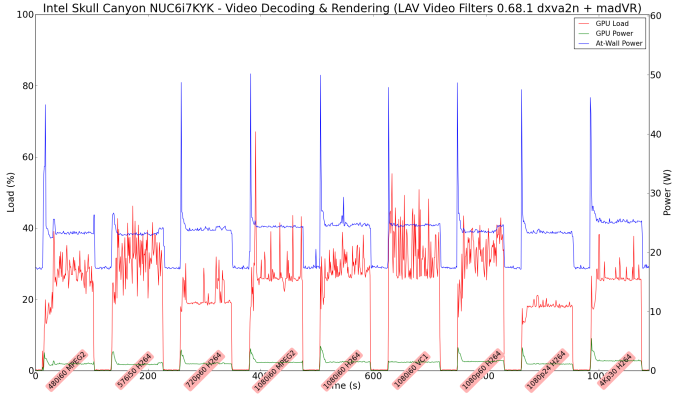

In order to evaluate local file playback, we concentrate on EVR-CP, madVR and Kodi. We already know that EVR works quite well even with the Intel IGP for our test streams. Under madVR, we used the DXVA2 scaling logic (as Intel's fixed-function scaling logic triggered via DXVA2 APIs is known to be quite effective). We used MPC-HC 1.7.10 x86 with LAV Filters 0.68.1 set as preferred in the options. In the second part, we used madVR 0.90.19.

In our earlier reviews, we focused on presenting the GPU loading and power consumption at the wall in a table (with problematic streams in bold). Starting with the Broadwell NUC review, we decided to represent the GPU load and power consumption in a graph with dual Y-axes. Nine different test streams of 90 seconds each were played back with a gap of 30 seconds between each of them. The characteristics of each stream are annotated at the bottom of the graph. Note that the GPU usage is graphed in red and needs to be considered against the left axis, while the at-wall power consumption is graphed in green and needs to be considered against the right axis.
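For readers trying to line up the graph with individual streams, the playback schedule described above can be sketched as follows. This assumes the run starts with the first stream at time zero and that no gap follows the last stream; neither detail is stated explicitly, and the function name is hypothetical.

```python
def playback_windows(num_streams=9, play_s=90, gap_s=30):
    """Return (start, end) times in seconds for each test stream."""
    windows = []
    t = 0
    for _ in range(num_streams):
        windows.append((t, t + play_s))
        # The next stream starts after the idle gap.
        t += play_s + gap_s
    return windows


if __name__ == "__main__":
    for index, (start, end) in enumerate(playback_windows(), start=1):
        print(f"stream {index}: {start:4d} s to {end:4d} s")
```

Under these assumptions, the ninth stream ends at 1050 seconds, so one full pass takes 17.5 minutes.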

Frame drops are evident whenever the GPU load consistently stays above the 85-90% mark. We did not hit that case with any of our test streams. Note that we have not moved to 4K officially for our HTPC evaluation. We did verify that HEVC 8b decoding works well (even 4Kp60 had no issues), but HEVC 10b hybrid decoding was a bit of a mess: some clips worked OK with heavy CPU usage, while other clips tended to result in a black screen (those clips didn't have any issues with playback using a GTX 1080).
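The "consistently above 85-90%" criterion can be checked against a log of GPU load samples with something like the sketch below. The 85% threshold comes from the text; the minimum run length and the sample values are placeholders chosen for illustration, and the function name is hypothetical.

```python
def sustained_overload(samples, threshold=85.0, min_run=5):
    """Find runs where GPU load stays at or above the threshold.

    Returns a list of (first_index, last_index) pairs for every run of
    at least min_run consecutive samples at or above the threshold.
    """
    runs = []
    start = None
    for i, load in enumerate(samples):
        if load >= threshold:
            if start is None:
                start = i  # a new run begins here
        else:
            if start is not None and i - start >= min_run:
                runs.append((start, i - 1))
            start = None
    # Close out a run that extends to the end of the log.
    if start is not None and len(samples) - start >= min_run:
        runs.append((start, len(samples) - 1))
    return runs


if __name__ == "__main__":
    # Made-up load percentages, one sample per second.
    log = [40, 90, 91, 50, 88, 89, 92, 95, 90, 60]
    print(sustained_overload(log, threshold=85.0, min_run=3))
```

Brief spikes, such as the two-sample excursion at the start of the made-up log, are ignored; only the longer run is flagged as a likely source of dropped frames.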

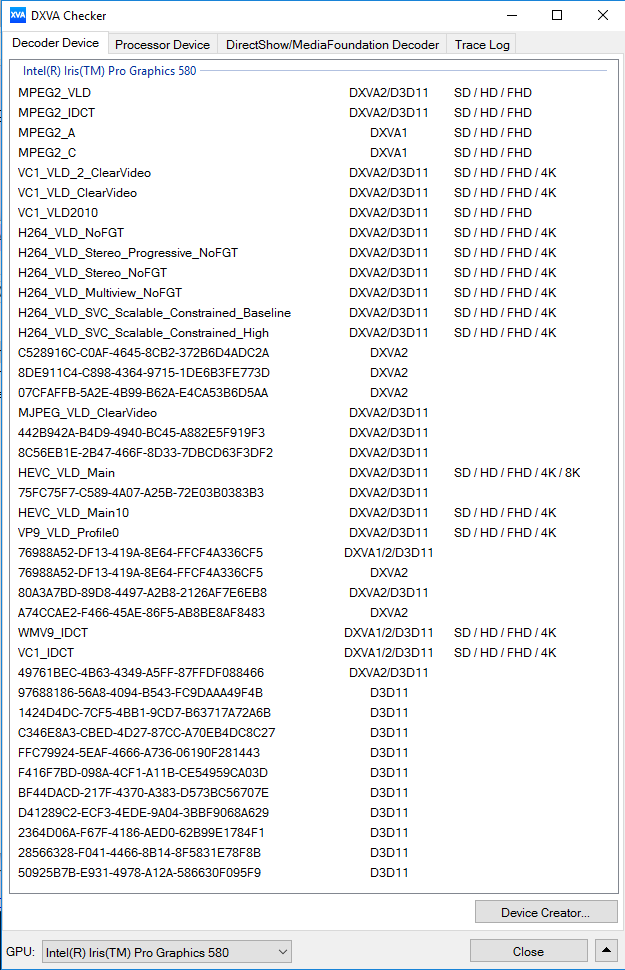

Moving on to the codec support, the Intel Iris Pro Graphics 580 is a known quantity with respect to the scope of supported hardware accelerated codecs. DXVA Checker serves as a confirmation for the features available in driver version 15.40.23.4444.

It must be remembered that the HEVC_VLD_Main10 DXVA profile noted above utilizes hybrid decoding with both CPU and GPU resources getting taxed.

On a generic note, while playing back 4K videos on a 1080p display, I noted that madVR with DXVA2 scaling was more power-efficient compared to using the EVR-CP renderer that MPC-HC uses by default.

133 Comments


utferris - Monday, May 23, 2016 - link

This can be better with ECC memory support. I just can not use any machine without ECC for work.

ShieTar - Monday, May 23, 2016 - link

But a low-frequency consumer quad-core is fine? What exactly do you do at work?

Gigaplex - Tuesday, May 24, 2016 - link

Sometimes reliability is more important than performance.

close - Monday, May 23, 2016 - link

Guess ECC is not high on the list for potential NUC buyers, even if it's a Skull Canyon NUC. I think most people would rather go for better graphics than ECC.

kgardas - Monday, May 23, 2016 - link

Indeed, the picture shows SO-DIMM ECC, but I highly doubt this is even supported since otherwise it's not Xeon nor Cxxx chipset...

close - Tuesday, May 24, 2016 - link

http://ark.intel.com/search/advanced?ECCMemory=tru...

tipoo - Monday, May 23, 2016 - link

What work do you want to do on a 45W mobile quad with (albeit high end) integrated graphics, that needs ECC?

I wonder how that would work with the eDRAM anyways, the main memory being ECC, but not the eDRAM.

Samus - Monday, May 23, 2016 - link

All of the cache in Intel CPU's is ECC anyway. Chances of errors not being corrected by bad information pulled from system RAM is rare (16e^64) in consumer applications.

ECC was more important when there was a small amount of system RAM, but these days with the amount of RAM available, ECC is not only less effective but less necessary.

I'm all for ECC memory in the server/mainframe space but for this application it is certainly an odd request. This is, for the most part, a laptop without a screen.

TeXWiller - Tuesday, May 24, 2016 - link

>ECC was more important when there was a small amount of system RAM,

The larger the array the larger target it is for the radiation to hit. Laptops are often being used in high altitude environments with generally less shielding than the typical desktop or even a server so from that perspective ECC would seem beneficial basic feature. Then there are the supercomputers, a fun read: http://spectrum.ieee.org/computing/hardware/how-to...

BurntMyBacon - Tuesday, May 24, 2016 - link

@TeXWiller: "The larger the array the larger target it is for the radiation to hit."

While this is true, transistor density has improved to the point that, despite having magnitudes more capacity, the physical array size is actually smaller. Of course the transistors are also more susceptible given the radiant energy is, relatively speaking, much greater compared to the energy in the transistor than it was when transistors were larger. There is also the consideration that as transistor density increases, it becomes less likely that radiation will strike the silicon (or other substrate), but miss the transistor. So we've marginally decreased the chance that radiation will hit the substrate, but significantly increased the chance that any hit that does occur will be meaningful.